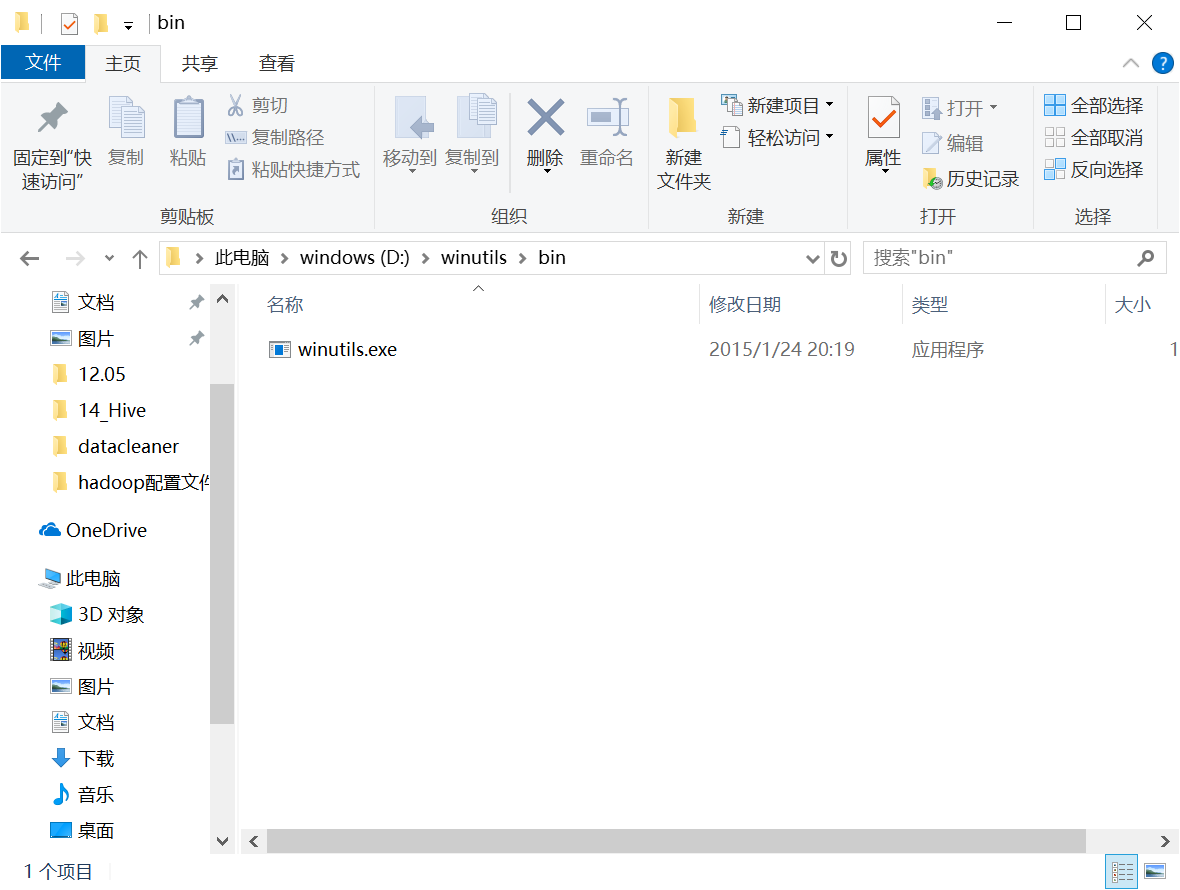

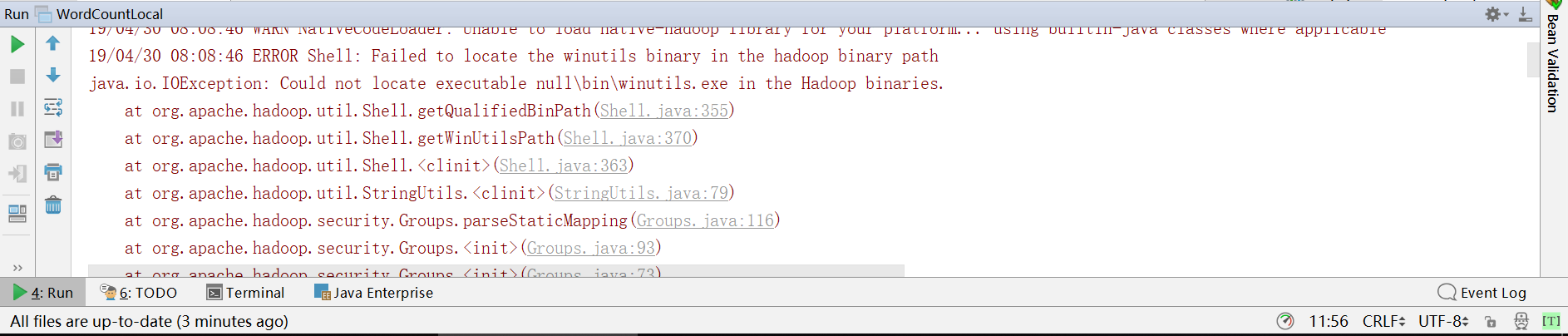

# Installation

1. Get the Hadoop binary distribution and extract it to the folder `C:\APPs\ApacheSuite\Hadoop`.
2. Set the environment variable `HADOOP_HOME` to the binary folder `C:\APPs\ApacheSuite\Hadoop` and append `%HADOOP_HOME%\bin` to `PATH`.
3. Add `%HADOOP_HOME%\sbin`, which is `C:\APPs\ApacheSuite\Hadoop\sbin`, to the `PATH` environment variable. This makes commands such as `start-all.cmd`, `stop-all.cmd`, and `start-dfs.cmd` available.
4. Add two environment variables named `HADOOP_CONF_DIR` and `YARN_CONF_DIR`, both with the value `%HADOOP_HOME%\etc\hadoop`, so that the Hadoop configuration files can be found.
5. Add `%HADOOP_HOME%\etc\hadoop` to the `PATH` environment variable so that the `hadoop-env.cmd` file is accessible.

MapReduce is a YARN-based system for parallel processing of large data sets. YARN is a framework for job scheduling and cluster resource management. HDFS is a distributed file system that provides high-throughput access to application data.

Now carry out the following configuration: in the `etc/hadoop/core-site.xml` file, enter the HDFS configuration and set it to listen on `localhost:9000`.
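As a rough illustration of the `core-site.xml` step, the file might look like the minimal sketch below. It assumes Hadoop 2.x or later, where the default file system is set through the `fs.defaultFS` property (older releases used `fs.default.name`). The post itself specifies only the address `localhost:9000`, so the property choice and the `hdfs://` scheme are assumptions.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Assumed property: URI of the default file system.
       Points clients at the HDFS NameNode on localhost, port 9000. -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
```

With this in place, HDFS paths without an explicit host resolve against `hdfs://localhost:9000`.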
